We have also published this article in German.

Dylan smiles into the camera, arm in arm with the other guests at an LGBT party on a boat. Behind them, glasses glisten on the shelves of a bar. Eight years ago a party photographer uploaded this snapshot to the Internet. Dylan had already forgotten about it, until today. Dylan wants to keep his private and professional life separate: during the day he works as a banker in Frankfurt am Main. But with PimEyes, a reverse search engine for faces, anyone can find this old party photo of Dylan. All they have to do is upload his profile picture from the German networking platform Xing, free of charge and without registration.

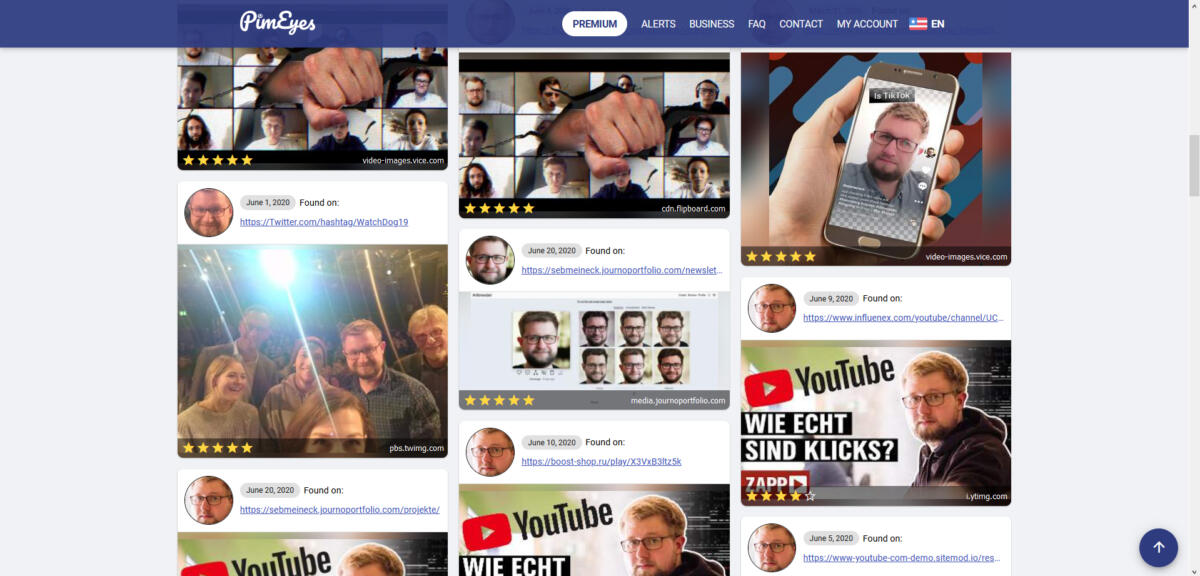

PimEyes analyses masses of faces on the Internet for individual characteristics and stores the biometric data. When Dylan tests the search engine with his profile picture, it compares the picture with the database and returns matching faces, each with a preview picture and the domain name where the picture was found. Dylan was recognised even though, unlike today, he did not have a beard at the time.

Our investigation shows: PimEyes is a broad attack on anonymity and it is possibly illegal. A snapshot may be enough to identify a stranger using PimEyes. The search engine does not directly provide the name of a person you are looking for. It does however find matching faces, and in many cases the shown websites can be used to find out names, professions and much more.

Whoever shows their face in public can be recognised; whether at a demonstration, in front of the polling station or on the night bus, as if we had our name tattooed on our forehead. In June the BBC and other media reported that PimEyes could be abused by stalkers. But the search engine can also expose sex workers, make so-called revenge porn more easily accessible or be used by the police to subsequently identify participants in a protest.

Like Clearview AI for all

The case is reminiscent of the scandal surrounding the US start-up firm Clearview AI — the difference being that PimEyes offers its biometric search not only to public authorities, but to everybody. At the same time, large tech companies such as IBM are publicly speaking out against facial recognition and are stopping cooperation with the police.

We demonstrated the capabilities of the software to German members of parliament, using their own photos. They recognise an enormous potential for abuse in PimEyes. Instagram and YouTube, whose content appears on PimEyes, want to take legal action against the search engine as a consequence of our investigation. With data protection experts warning of a large-scale violation of the European General Data Protection Regulation (GDPR), PimEyes risks heavy fines.

Dylan sees PimEyes as a danger. „This search engine can out people,“ he says. He himself has come out, even in his job as a banker. But he knows many homosexual colleagues who have not. „Especially in my profession, many people still stick to conservative values,“ says Dylan. „I know people who have a double life, living in a heterosexual marriage and secretly seeking protection in the LGBT community.“ He suspects that there may be photos of him, too, that he does not want to be associated with in public, such as from Christopher Street Day.

Dylan uses an alias on Instagram and Twitter, where he posts about the struggle for LGBT rights and anti-racism. Professional contacts do not know his aliases, he says. The face search could change that, against his will. PimEyes, according to Dylan, could cause a lot of damage to people. He asked us to change his name for the article.

So far, the search engine only returns two results for Dylan’s face. But that could quickly become more, as the Polish company behind PimEyes keeps scanning the Internet for photos.

900 million faces, 1 terabyte of photos per day

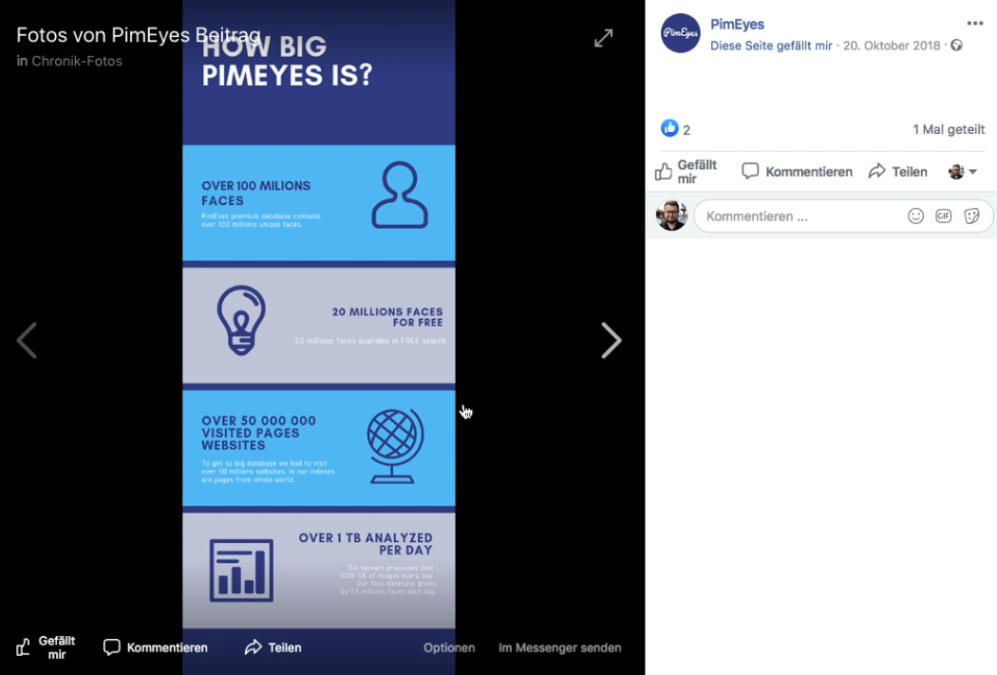

The Facebook page of PimEyes is no longer available, but in a post from 2018 PimEyes boasts of huge numbers: it claims that more than 1 terabyte of photos is analysed every day and that the database contains the biometric data of more than 100 million faces. That number grew rapidly: one year later, 500 million faces had been analysed, according to a document from the Polish Agency for Enterprise Development. By April 2020, according to the PimEyes website, this figure had risen to 900 million faces. That would be more than the population of Europe and Russia combined.

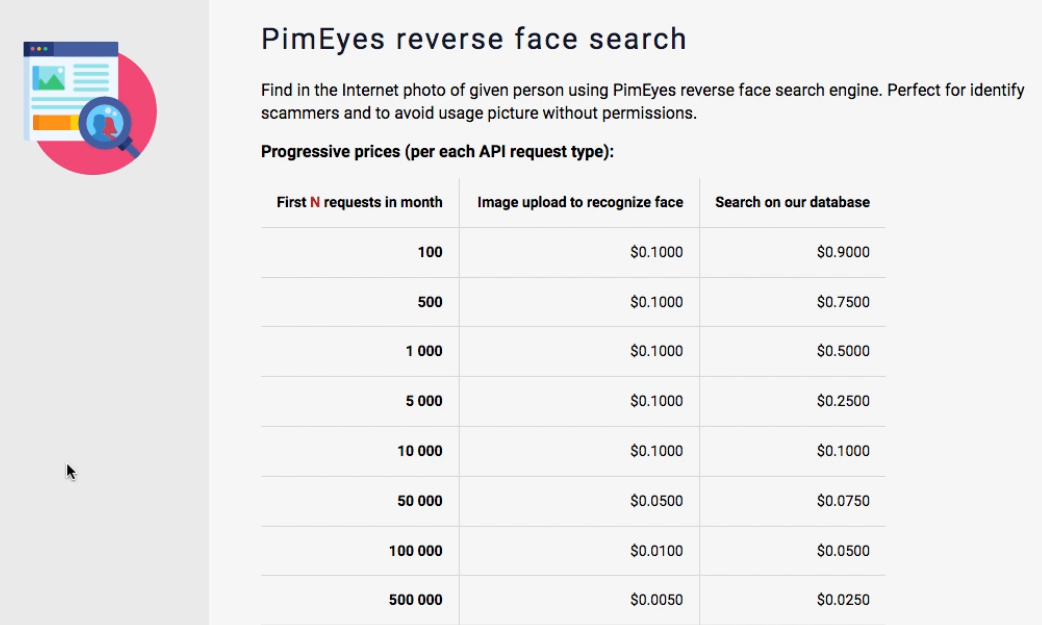

A feature for developers reveals what the operators dream of: there is a large discount if a customer wants to carry out 100 million face searches per month.

Shortly after asking PimEyes about the Facebook post with these numbers, the post is suddenly offline. The more we confront PimEyes with questions, the more the company contradicts itself. Questionable passages from their website are changed several times during our investigation or taken offline.

PimEyes poses as a friendly helper

It is no coincidence that PimEyes delivers much more than Google image search. Technologically, a biometric search would not be a problem for Google, but privacy laws are an obstacle. Facebook, too, has had a history of failed attempts to apply recognition software on its users. By now, facial recognition is available to Facebook users, but it is switched off by default.

The GDPR states that the „processing of biometric data for the purpose of uniquely identifying a natural person shall be prohibited“. PimEyes argues: the search engine is not about identifying a person. Users should solely upload their own faces.

Thus PimEyes does not present itself as a threat to privacy, but as a helper. „Upload your photo and find where your face image appears online“, it says on the homepage. „Start protecting your privacy“.

These sentences are new. They only appeared on the website after we sent the company a list of questions. Among other things, we wanted to know what PimEyes was doing about the possible abuse of its technology by stalkers. The international debate over the potential for abuse of PimEyes began with an article on the blog One Zero in June 2020.

The company apparently wants to avoid the topic. And so the search engine has recently claimed to be a tool for digital self-defense, so that for example users can detect possible fake profiles of themselves.

Photos of Meghan, Duchess of Sussex

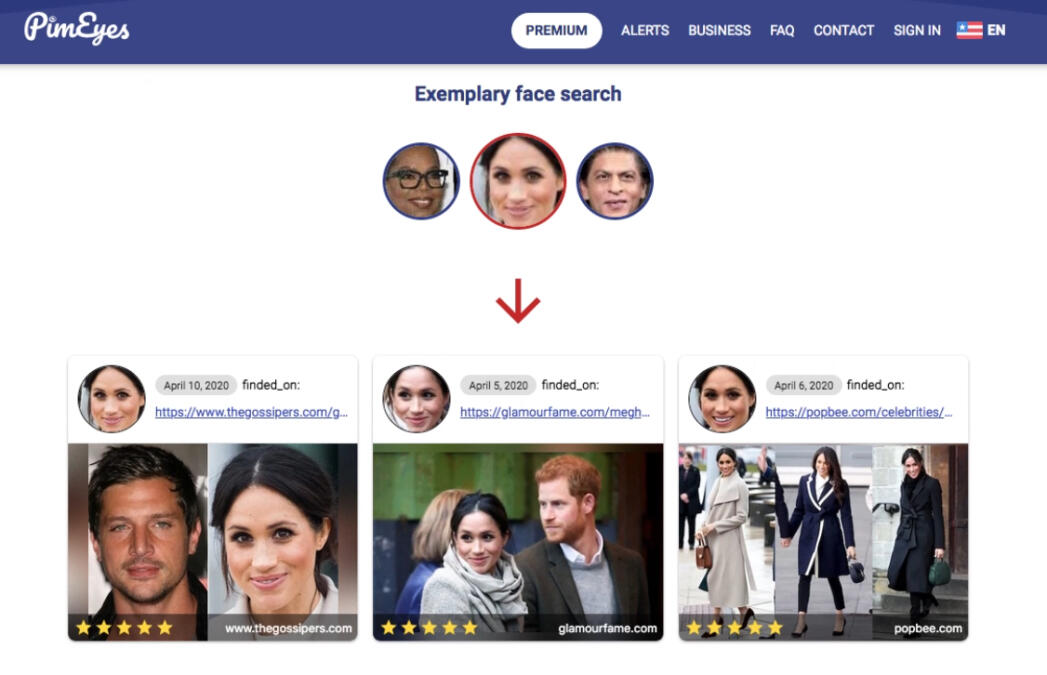

PimEyes’ claim that it wants to protect people’s privacy is coming under scrutiny. Sure, the firm may ask users only to upload their own image. Nevertheless, the biometric database already contains data of several hundred million analysed photos. Not even PimEyes seems to seriously assume that visitors are only looking for their own face. The operators have repeatedly encouraged their users to upload pictures of strangers.

On its home page, PimEyes recently suggested using the software to search for pictures of famous people. At the end of June, it showed photos of talk show host Oprah Winfrey or Meghan, Duchess of Sussex.

The operators appear to be aware that this could now be a problem. Old traces that do not fit the new privacy-friendly narrative have been hastily erased. Also missing is a YouTube advertising clip showing a search for pictures of actor Johnny Depp.

„In the past, Pimeyes made it possible to search for faces of public figures“, the company admits when asked. In an e‑mail the operators claim that their service can now only be used to upload photos of their own faces.

This decision was apparently taken at very short notice by Łukasz Kowalczyk and Denis Tatina, the people behind PimEyes. The graduates of the University of Science and Technology of Wroclaw started PimEyes as their hobby project. In addition to that, they ran another service called Catfished. The website for this service literally inspired users to misuse the technology.

„Use the power of AI and facial recognition to find friends and relatives on the Internet,“ it stated. The operators suggested entering photos of profiles from dating platforms into the search engine. „Use face recognition to find out more about your crush.“ This likely sounds appealing — to stalkers.

25 alerts for allegedly only one face

Today, Kowalczyk and Tatina claim that Catfished is a „dead project, developed not for EU countries, that was never really finished nor published“. Catfished had a privacy policy, which was managed by PimEyes as administrator of the website and which referred to the GDPR, which means it was clearly intended for EU users.

Like PimEyes, Catfished was also still functional until recently. We have successfully managed to search for a face with this service. Catfished was intended to be used and spread online. The website disappeared from the web only after a request for comment by netzpolitik.org. This and further cleanup work cannot hide the inconsistencies behind PimEyes.

For example, if you subscribe to the firm’s premium plan, you can set an email alert as soon as the software detects new matches with an uploaded face. Strangely enough, premium customers can not only set an alert for their own face, but for up to 25 different faces.

PimEyes justifies this by saying that multiple uploaded photos improve the accuracy of the matches. „One from the beach, one with glasses etc.,“ the company writes to us.

A glance at the program interface reveals that this answer is dubious: Even for a single alert, several photos can be uploaded to increase the accuracy of the hit. Thus, the other 24 alerts would be useless for the customer’s own face.

Discount for mass queries

How does PimEyes make money? The premium subscription of the search engine costs between 16 and 23 euros per month. For premium customers, among other things, links to the images found are displayed. According to the company, PimEyes currently has only about 350 premium subscribers. Kowalczyk and Tatina wouldn’t have made much money with that yet.

But PimEyes advertises another feature that could be the key to high revenues. At the same time, the feature raises massive doubts that the search engine is about protecting one’s privacy: It is a payment model for mass searches.

Kowalczyk and Tatina say that this offer is hardly in demand. However, it basically invites large-scale abuse of the technology. Instead of painstakingly uploading individual photos to the PimEyes website, a few lines of code can handle thousands of queries. At the same time, developers can integrate the search engine into their own applications, which PimEyes has no control over.

The company initially charges around one euro per search via the programming interface. With mass searches, it becomes significantly cheaper. If someone wants to query 100 million images per month, a single search won’t even cost a cent. But what kind of person searches his or her own face 100 million times a month?
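The scale of that offer can be made concrete with a short back-of-the-envelope calculation. The sketch below is not taken from PimEyes' price list; it uses only the two figures quoted above (around one euro per search at the entry level, less than one cent at 100 million searches per month) and assumes a 30-day month. All names in the code are illustrative.

```python
# Figures quoted in the article; PimEyes' actual price list is not reproduced here.
SEARCHES_PER_MONTH = 100_000_000
ENTRY_PRICE_CENTS = 100   # "around one euro per search"
BULK_CEILING_CENTS = 1    # "won't even cost a cent": the real price is below this

SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # assumption: a 30-day month


def monthly_cost_eur(searches: int, price_cents: int) -> int:
    """Total monthly cost in whole euros for a given per-search price in cents."""
    return searches * price_cents // 100


at_entry_price_eur = monthly_cost_eur(SEARCHES_PER_MONTH, ENTRY_PRICE_CENTS)
bulk_upper_bound_eur = monthly_cost_eur(SEARCHES_PER_MONTH, BULK_CEILING_CENTS)
searches_per_second = SEARCHES_PER_MONTH / SECONDS_PER_MONTH

print(f"At the entry price: {at_entry_price_eur:,} euros per month")
print(f"At the bulk rate: under {bulk_upper_bound_eur:,} euros per month")
print(f"Sustained rate: about {searches_per_second:.0f} searches per second")
```

Under these assumptions, a customer on that plan would pay less than one million euros per month and would have to run roughly 39 searches every second, around the clock, to use up the quota.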

Search results from porn sites

Even with the positive reviews presented on the website, appearances are deceptive. Three testimonials from alleged users are intended to illustrate how helpful the search engine is. „Thanks to PimEyes, I found a profile on a dating site that pretended to be me,“ reports one Alister Richards. She enthuses: „Tools like this improve our online privacy.“

There is a photo next to the testimonial. But if you search for the photo of the alleged PimEyes user with PimEyes, something surprising happens: Alister Richards also appears on several other websites, each time with a different name and showering another online product with praise. It is the same with the other testimonials. Are they all fake? Kowalczyk and Tatina confirm that the photos are not real. But allegedly, the quotes are. „Their authors wanted to be anonymous.“

However, PimEyes itself, in particular, could compromise anonymity. The search engine even shows photos from porn sites. In an advertising clip that has since been deleted, PimEyes advertised the search on „adult sites“ as a premium feature, obviously trying to make money with it. Today the results from porn sites are mixed in with the other search results.

PimEyes weaponizes digital violence

Łukasz Kowalczyk and Denis Tatina appear to accept that sex workers are involuntarily outed. PimEyes is also a threat to those affected by voyeurism and so-called revenge pornography, i.e. recordings made or distributed without consent. The perpetrators want to hurt and degrade women, in particular, by making the recordings accessible to as many eyes as possible. The search engine plays right into their hands.

In August 2019, Tom accidentally found nude photos of his wife Nicole on the Internet during a reverse image search in another search engine: 190 different photos, plus eight illegally published videos. To hunt down the perpetrator(s), Tom created a spreadsheet and collected around 2,700 links to the locations of the photos. The online magazine VICE reported on this case. The couple’s names have been changed to protect them.

„PimEyes is an absolute catastrophe,“ Tom says today in an interview with netzpolitik.org. „I did a test search on PimEyes and immediately found 61 search results with illegally published nude photos of my wife.“

Faced with the problem, PimEyes writes: „Our tool helps to fight revenge porn“. Tom is not convinced. „What’s the point of finding the pictures if you can’t completely remove them from the Internet?“

It is a problem in itself that porn sites do not consistently delete problematic images. But even PimEyes does not reliably remove the search results, even when affected people demand it. For this purpose PimEyes offers its own notification form. But when we try to use it, there is no apparent follow-up.

In June, we asked PimEyes to remove a photo of the author of this article from the search results, and shortly afterwards we received a confirmation email. But nothing was deleted. We identify ourselves as journalists and ask why the search result is still visible. „This function is working properly and is well tested“, PimEyes answers. „We have no complaints from users about it.“

Tech companies defend themselves against PimEyes

Anke Domscheit-Berg, spokeswoman for digital politics for the party The Left in the Bundestag, describes PimEyes as „highly dangerous“ in an interview with netzpolitik.org. Women who want to move anonymously in public spaces could be more easily identified and exposed to harassment, says Domscheit-Berg.

„I find the idea extremely disturbing that every creep on the subway can identify me via a mobile phone photo and find my place of residence and work without major hurdles.“ Stalkers and pedophiles could also locate the surroundings or whereabouts of their victims.

A search engine like PimEyes becomes powerful when it can analyse photos from social media. And indeed: content from Instagram, YouTube, TikTok, Twitter and vKontakte also appears on PimEyes. We made screenshots of these search results, including direct links. When we asked the company about it, the answer was: „We don’t scrape any social media sites“.

We asked the Instagram parent company Facebook to comment. „Scraping people’s information from Instagram is a clear violation of our policies, and an abuse of our platform,“ wrote a spokesperson. Facebook claims to have immediately banned all accounts associated with PimEyes and their founders on Facebook and Instagram. „We’ve sent them a legal demand to stop accessing any data, photos, or videos from our services.“

YouTube is also claiming a violation of its terms of service. „Accordingly, we will send a cease and desist letter to PimEyes detailing the terms of service violations,“ said a spokesperson. TikTok is planning legal action, a spokeswoman says; Twitter reserves the right to take legal action. vKontakte has not yet answered our questions.

PimEyes is a reminder of the scandal surrounding Clearview

A possible reason for the strong reactions: A similar case has already occurred. The start-up company Clearview AI had also analysed a mass of faces from the Internet for a biometric database. But Clearview AI was not openly available on the web for everyone. According to an investigation by the news website Buzzfeed News, the customers of the company included companies, governments and police authorities. Earlier this year, Google, Facebook, and Twitter had opposed it.

The widespread opposition to Clearview AI was perceived as groundbreaking by data protectionists and should have been a warning to PimEyes. Biometric data is considered sensitive. Anyone who misuses such data risks steep fines and may even have to pay compensation to those affected.

The data protection commissioner of Baden-Württemberg, Stefan Brink, is particularly critical of PimEyes. According to the GDPR, biometric data belong to the so-called special categories of personal data. „This is the most worthy of protection we know of,“ says Brink. According to him, the company would only be allowed to process the biometric data if it had the express consent of the persons concerned. This means that with an alleged 900 million faces in the database, PimEyes would need to have 900 million consents.

When we confront Kowalczyk and Tatina with the suspicion of violating laws on data protection, they argue that the GDPR prohibits the processing of biometric data for the purpose of unambiguous identification, with a few exceptions such as the explicit consent of the user. But the founders argue that because PimEyes does not assign names to faces, there is no legal issue. Their service, according to the PimEyes operators, does not differ from other search engines.

„Violation of the right to one’s own image“

Users have recently had to tick a box and confirm that they are really uploading only their own face before submitting a query. The firm evidently does not like to remember a time when PimEyes suggested entering Duchess Meghan into the search engine.

PimEyes wants to shift the responsibility for the possible abuse of the search engine onto the users, a change of track that appears to have taken place right after our press enquiry.

The IT lawyer Sabine Sobola strongly advises potential users of PimEyes against uploading photos of third parties to the search engine. „If consent is not given, this is a violation of the right to one’s own image“, she says, „and this will result in claims for injunction and damages if the person depicted should become aware of this“. In other words, anyone uploading a photo of their neighbor to PimEyes could get into legal trouble.

The risk of getting caught, however, remains low, and the only people who could provide proof of a violation would be Kowalczyk and Tatina themselves. According to the founders, photos are stored for 48 hours before potential evidence is deleted. Until recently this was supposedly done immediately.

PimEyes identified 93 of 94 members of parliament

For our investigation we want to find out whether it is really possible to use PimEyes on a large scale. We write a piece of software for the programming interface offered by PimEyes. And we learn: if you pay, you can apparently do what you want with PimEyes.

For the experiment we need a number of test persons, but we do not want to upload private photos under any circumstances. Therefore we decide to use pictures of members of the Bundestag. On a randomly chosen day we take screenshots of all members of parliament at the speaker’s desk from parliamentary television: a total of 94 politicians. These are not perfect portrait photos. In many cases the face is only visible at a side angle. To make it even harder for the search engine, we shrink the individual heads to the size of stamps.

Using the programming interface we match the 94 faces of the politicians with the database of PimEyes. Our automated queries take less than five minutes. The search engine spits out more than 2,500 links to image files. For the majority of the photos, the software claims to have detected at least 90% similarity. And indeed, in 93 cases PimEyes found additional photos of the same person. Only in one case were all search results wrong.
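To put these figures in proportion, here is a small worked calculation. It is only a sketch based on the numbers reported above and does not reproduce the software used in the experiment. Because the text gives bounds ("more than 2,500 links", "less than five minutes"), the derived values are bounds too, and the hourly rate additionally assumes that the throughput of the test run could be sustained. All names in the code are illustrative.

```python
# Figures reported for the experiment with screenshots from parliamentary television.
FACES_QUERIED = 94
FACES_MATCHED = 93          # cases in which photos of the same person were found
LINKS_RETURNED = 2_500      # "more than 2,500": a lower bound
RUNTIME_SECONDS = 5 * 60    # "less than five minutes": an upper bound

hit_rate = FACES_MATCHED / FACES_QUERIED
links_per_face = LINKS_RETURNED / FACES_QUERIED
seconds_per_face = RUNTIME_SECONDS / FACES_QUERIED

# Assumption: the same throughput holds for longer runs.
faces_per_hour = FACES_QUERIED * 3600 / RUNTIME_SECONDS

print(f"Hit rate: {hit_rate:.1%}")
print(f"Links per face: more than {links_per_face:.1f}")
print(f"Time per face: less than {seconds_per_face:.1f} seconds")
print(f"Faces per hour: at least {faces_per_hour:.0f}")
```

That corresponds to a hit rate of about 98.9 percent, more than 26 links per face on average, and just over three seconds per face at most. At that pace, a single script could work through more than a thousand faces per hour.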

Instead of searching for 94 members of the Bundestag, we could have let PimEyes search for the faces of hundreds or thousands of people. And instead of using footage from parliamentary television, we could have fed the service images from surveillance cameras.

It is possible that this is already happening. After all, law enforcement agencies are also interested in facial recognition, and PimEyes has landed an early coup in this area.

Surveillance technology for the state

PimEyes has been integrated into the software Paliscope by the Swedish company Safer Society Group since 2018. The application is designed to help investigators to combine data from different sources. The Safer Society Group is well connected, and its customers include the European police agency Europol.

A company spokesperson does not want to say whether law enforcement agencies within the EU or even Germany use Paliscope — and thus possibly PimEyes. He doesn’t even go into specific questions, but says: „We would never collaborate with any partner that is breaking the law.“

PimEyes was still publicly trying to win law enforcement agencies as new clients at the beginning of June. The FAQ on the PimEyes website stated that investigators could use the search engine to find matching faces on the so-called darknet. But two days after One Zero reported this, the text disappeared.

Kowalczyk and Tatina confirm that they removed it. But only because law enforcement allegedly didn’t show interest in PimEyes. An explanation that is particularly surprising because the operators have been working in the background for at least four months on their own product targeted at law enforcement agencies. Apparently they have even founded another company for this: Faceware AI.

A mailbox in the USA

According to Kowalczyk and Tatina, Faceware AI is still under construction. The two wanted to develop custom software for law enforcement agencies and use it to find missing children, they wrote to us in an email. However, according to them, Faceware AI has nothing to do with PimEyes.

On the professional networking platform LinkedIn the company claims that Faceware AI designs software for facial recognition and machine learning. Technologies that sound very much like what the founders worked on for years in Wroclaw under the name PimEyes.

On LinkedIn there is one other Faceware AI employee who, according to his profile, is supposed to take over the distribution of the new service beyond Europe. The founders claim that Faceware AI’s headquarters are in the US state of Rhode Island. However, there is no company by that name in the Rhode Island commercial register.

But in early April, just one day before Łukasz Kowalczyk and Denis Tatina registered Faceware AI’s internet domain, someone in the state of Delaware registered a company named Faceware Inc. using a service provider that offers anonymity for the directors. Delaware is known as a tax haven and home to numerous offshore companies. We have asked Kowalczyk and Tatina several times whether Faceware Inc. is their company. But the PimEyes operators do not want to comment on this.

Facial recognition by the police

If Faceware AI were a functional tool, many investigating authorities might be interested. In the United States, many police authorities are already working with Amazon. The company offers „Amazon Rekognition“, a facial recognition software.

Civil rights organisations are calling for a ban on facial recognition software, among other things because Rekognition and similar tools have been shown to have a racist bias. Its use could wrongly lead to investigations of People of Color. Amazon recently announced that it will not give the police access to „Rekognition“ for a year.

Data protection may be stricter in Europe, but for the police, facial recognition has long been available in Germany. The police database INPOL, for example, stores around 3.65 million people, and the number of requests to the Federal Criminal Police Office’s facial recognition system is constantly increasing, as the Federal Ministry of the Interior announced in April in its response to a parliamentary inquiry. In 2019 alone, investigators made 54,000 queries there.

PimEyes would probably only be allowed to cooperate with German authorities if Kowalczyk and Tatina had established their database by legal means. „Such cooperation would need to have a clear legal basis,“ says data protectionist Stefan Brink.

Arrested for showing up at a protest

Brink warns of the consequences that the use of facial recognition on a large scale could have. „What is being done here has the potential to change the way we behave and interact in society,“ he says. „After all, it means nothing less than that we lose anonymity when we move around in public spaces, and that we can in principle be identified at any time and in any place.“

MP Anke Domscheit-Berg warns of a threat to freedom of speech from facial recognition search engines. „Intelligence services and other state agencies could identify protestors and investigate their relationships with others.“ If people had to fear that, they would rather stay away from protests.

How dangerous PimEyes could be when used against protests becomes clear when we upload a photo of Domscheit-Berg herself to the search engine. It shows the politician at a protest, against mass surveillance, of all things. After four and a half seconds, the software shows us about 60 pictures which it suspects to show Domscheit-Berg.

Three years ago, the Hamburg police demonstrated that facial recognition technology could really be used to identify participants in protests. In connection with the protests against the G20 summit, they collected extensive image and video material. Eventually, a special commission ran software on more than 30,000 recordings, searching for faces.

Facial recognition is already a reality in everyday life in Moscow. In the Russian capital, where participants in political protests are repeatedly arrested, software from a company called FindFace analyses recordings from public surveillance cameras. „Moscow is safe with FindFace,“ the company praises itself on its website.

Earlier this year, German Interior Minister Horst Seehofer reportedly attempted to introduce automated facial recognition in public places with the new Federal Police Law. Meeting stiff resistance, Seehofer withdrew the proposal. But later statements, especially from CDU/CSU politicians, hint that such a move might merely have been postponed.

Even the White Paper on the AI strategy of the EU Commission, in which political hopes had been placed, does not provide for a separate regulation of technologies for facial recognition. Despite initial considerations, the Commission did not impose a moratorium in order to gain time for risk assessment. This puts pressure on German federal politicians to react themselves.

The CDU/CSU’s digital policy spokesperson calls for regulation

Tankred Schipanski, the spokesman for digital politics of the CDU/CSU in the Bundestag, considers regulation to be necessary following our investigation into PimEyes. „If this does not succeed at the EU level in the near future, we will have to take action here as national legislators,“ he told netzpolitik.org. Schipanski describes the current situation as untenable.

„The potential for abuse of such an application is enormous,“ says Jens Zimmermann, spokesman for digital politics for the SPD parliamentary group. He calls for a close examination of whether the existing legal regulations offer sufficient protection. „Do we really want to live in a society where anonymity in public spaces is de facto no longer possible?“

Anke Domscheit-Berg has gone one step further and, as a result of our investigation, has contacted the Federal Data Protection Commissioner Ulrich Kelber in her function as a member of parliament. She says: „If this app does not have a legal basis, as required by the GDPR, appropriate sanctions must therefore be imposed and the distribution of the app must be stopped as soon as possible“.

Through his spokesperson, Kelber announced that he would contact the responsible Polish data protection supervisory authority, Urzad Ochrony Danych Osobowych (UODO), to obtain further information.

Persons affected by data protection violations have the right to compensation

UODO left an enquiry from netzpolitik.org unanswered. It remains unclear for now whether the Polish authority even knows about PimEyes. If the watchdog finds that Kowalczyk and Tatina have violated the GDPR, the founders may be facing a fine.

When UODO imposed a fine on a company for the first time last year, the firm was supposed to pay around 220,000 euros. At that time, the fine concerned unfulfilled information obligations, affecting about six million people. The database of Kowalczyk and Tatina is said to contain 900 million different faces.

PimEyes may have its headquarters in Poland, and UODO may be responsible for the company. However, those affected in Germany who want to take action against the service can contact their respective state authority for data protection. This authority would then have to pursue the case on their behalf.

Stefan Brink considers the search engine a classic case of infringement of the right to one’s own image, as he says. „This virtually screams for civil damages claims.“

In the case of data protection violations, the GDPR gives those affected a right to such compensation.

For Dylan, the banker from Frankfurt am Main, it is clear: He simply does not want his face to be found on PimEyes. It is likely that there are a lot of photos of strangers, where he is shown in the background by chance. „The only way to protect yourself from such a search engine would be to walk around in disguise,“ he says.

Dylan has also used PimEyes’ notification form to ask the company to delete his photo showing him at the LGBT party on a boat from the search results. A little later, confirmation was sent by email.

But the allegedly deleted image is still in the search results days later.