Something in the millions – that is a number that sticks when you read reports about sexualized violence against children. Numbers are news, and when it comes to serious crimes, their news value is high. But numbers have to be correct and need to be contextualised, which often proves challenging for news media and politicians.

This is particularly true of an ongoing debate among politicians, child protection advocates, civil rights organizations and IT experts over a new proposal by the European Commission on how to fight child abuse. It stipulates that online providers must screen private chats if ordered to do so. This so-called chat control is intended to detect sexualized violence against children.

The plan is supported by figures. In its draft, for example, the European Commission writes that in 2021, there were almost 30 million reports of child sexual abuse on the Internet. Yet this claim stems from a narrow interpretation of the data. To discuss the pros and cons of chat control, a closer look is needed.

This article has also been published in German.

After all, the huge number does not mean that there are almost 30 million potential victims. In fact, it is not even suitable for approximating that figure. Without further explanation, such numbers create a grossly distorted picture of the known extent of sexualized violence against minors online. Here is an overview of what is really behind the numbers, and what is not.

Of abuse and sexualized violence

„Abuse“ is a common term for sexualized violence against minors. It appears in official documents, laws and everyday language. However, many of those affected argue that it is better to speak of sexualized violence. Their reasoning: the term „abuse“ implies that there could also be a legitimate use of people, but people are not objects.

The German federal government has appointed a „commissioner for questions of sexual child abuse“. On an information page, she explains the criticism of the term „abuse“, but also names one merit: it is „precisely the abuse of the trust of affected children or adolescents“ that constitutes the essence of these acts.

A U.S. organization called NCMEC (National Center for Missing and Exploited Children) also speaks of abuse. It is the world’s most important source of figures on sexualized violence against minors on the Internet. The figure that made it into the EU Commission’s draft law also comes from NCMEC: almost 30 million (29.3 million). That is how many reports the centre received in 2021 through its CyberTipline.

29.3 million: Most reports come from Facebook

NCMEC collects tips about recordings of sexual violence against children from around the world. It is a non-profit organization funded partly by the US Department of Justice and partly by donors. NCMEC receives reports primarily from providers of large online platforms, but users can also submit tips manually through an online form. If a report can be attributed to another country, NCMEC informs the authorities there. NCMEC did not respond to press inquiries from netzpolitik.org.

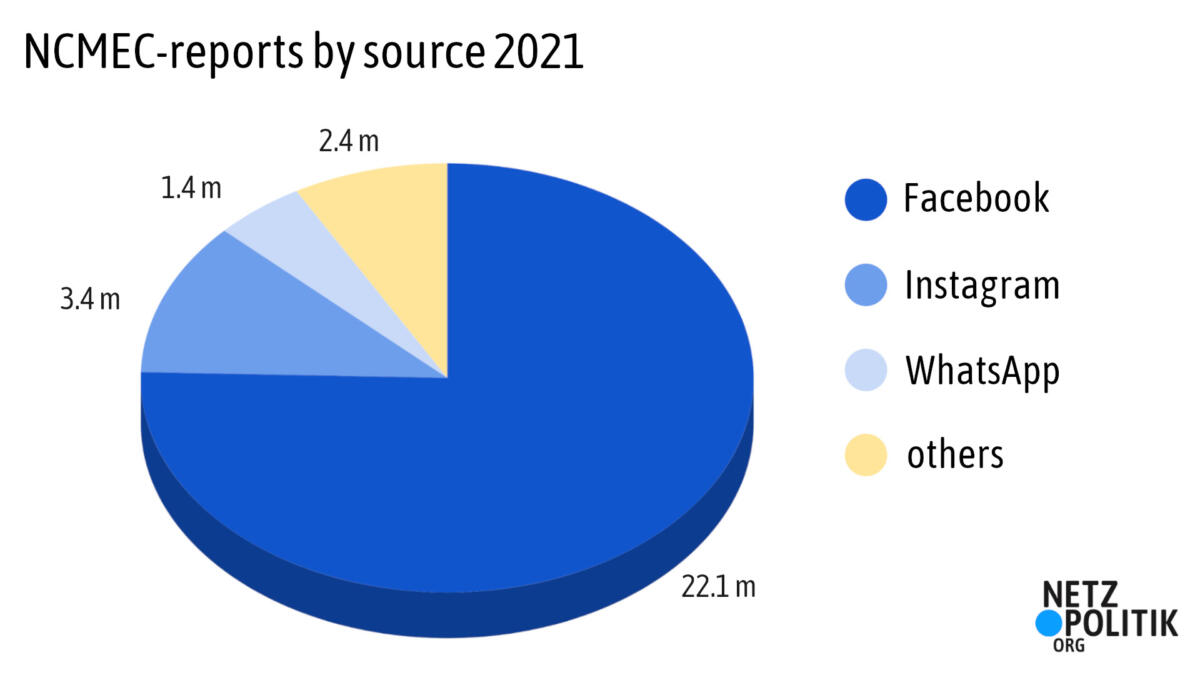

NCMEC reports are not representative of what is happening on the Internet, because the majority of them come from just one source: 75 percent of all NCMEC reports from 2021 come from Facebook. Instagram and WhatsApp also contribute many reports. All three platforms belong to Meta, and together they account for about 92 percent of all NCMEC reports.

NCMEC also publishes a breakdown of reports by country. According to this, most reports last year came from India (4.7 million), the Philippines (3.2 million) and Pakistan (2 million). Germany was assigned about 79,700 reports, and the U.S. about 716,000.

However, the 2021 reports cover an even larger number of files, because a single report can contain several photos or videos. So there are even more suspicious recordings behind the many reports, and that number is an important intermediate step.

85 million: A large part of the recordings are duplicates

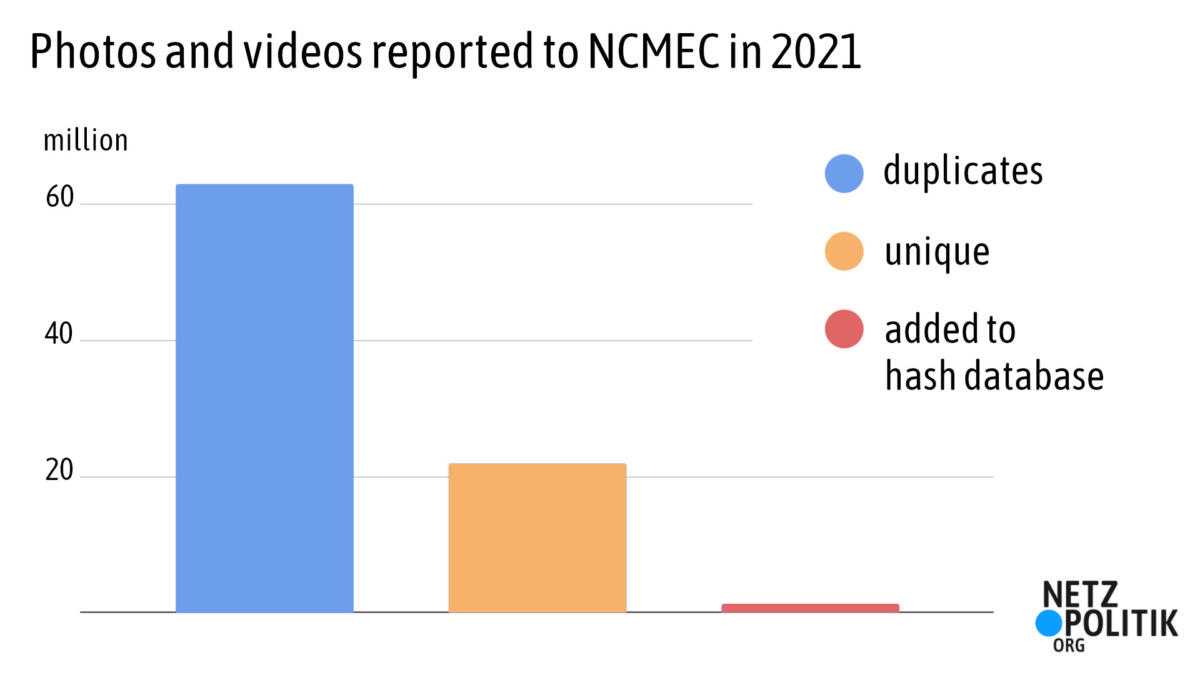

85 million is the highest number in NCMEC’s annual report. That is how many photos and videos were reported to NCMEC in 2021, each on suspicion of sexual violence against minors. A very large portion of these recordings are duplicates: not different images, but the same ones reported again and again. Once duplicates are removed, 22 million unique recordings remain out of the 85 million reported.
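The gap between reported and unique recordings is a matter of counting. As a minimal illustration, the Python sketch below assumes that each reported file has already been reduced to a fingerprint string (the toy data and the function name `count_total_and_unique` are hypothetical) and counts total versus distinct entries. The last line divides the article's two figures, 85 million by 22 million.

```python
from collections import Counter

def count_total_and_unique(fingerprints):
    """Return (number of reported files, number of distinct files)."""
    counts = Counter(fingerprints)  # fingerprint -> how often it was reported
    return sum(counts.values()), len(counts)

# Hypothetical toy data: each letter stands for one recording's fingerprint
reported = ["a", "b", "a", "c", "a", "b"]
total, unique = count_total_and_unique(reported)
print(total, unique)  # 6 3

# Average number of reports per unique recording, NCMEC's 2021 figures in millions
print(f"{85 / 22:.1f}")  # 3.9
```

An average of roughly 3.9 reports per unique recording hides how skewed the distribution is: in Facebook's own analysis, a handful of videos accounted for half of its reports.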

Facebook, the biggest contributor, has provided an internal analysis of its own NCMEC reports over a limited period: two months in the fall of 2020. During that time, just six different videos, shared over and over again, were responsible for half of all NCMEC reports by Facebook. According to Facebook, 90 percent of the reported content was identical or similar to already known material.

So NCMEC’s much-cited figures do not really describe the extent of recordings of sexualized violence against children online. Rather, they describe how often Facebook discovers copies of already known recordings. That, too, is relevant: even if the same recording is shared over and over again, each re-sharing is a crime in its own right. „For those affected, this is unbearable because they know that the violence they have experienced is being traded and shared over and over again,“ explains Kerstin Claus, child abuse commissioner of the German government.

However, anyone who wants to put an exact figure on this crime will have to keep looking for a suitable number. Even the figure of 22 million unique recordings is misleading, and NCMEC’s analysis goes further.

5 million: The database of illegal recordings

Not all unique recordings reported to NCMEC show violence. For example, NCMEC also receives cases of so-called sexting, in which teenagers consensually send each other nude photos of themselves. This is not sexualized violence. Another example: perpetrators often also take non-sexualized photos of their victims, and such recordings are not illegal in themselves.

NCMEC investigates which photos really show criminal content. In doing so, the analysts focus on recordings from the U.S., according to a report by the EU Commission. „This service cannot be provided for non-US reports due to the much higher volumes,“ the report says. In other words, it’s not clear what percentage of the 22 million unique recordings are in fact harmless.

What remains is a set of recordings that NCMEC clearly classifies as illegal. They are known as „CSAM“, short for „child sexual abuse material“. NCMEC feeds such verified CSAM into a database. In 2021, there were 1.4 million such verified recordings.

NCMEC’s database does not consist of the images themselves, but of hashes. To the human eye, hashes are just a jumble of characters. To software, a hash is like a fingerprint that can be used to recognize an image. In this way, NCMEC builds a filter against CSAM: Thanks to the hashes, newly discovered recordings can be quickly compared with the database. Online providers such as Facebook can use this database themselves.
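The fingerprint idea can be sketched in a few lines of Python. This is a simplified illustration, not NCMEC's actual system: it assumes an exact cryptographic hash (SHA-256 from Python's standard library) and a hypothetical in-memory set `known_hashes` standing in for the database. Only fingerprints are stored, never the files themselves.

```python
import hashlib

def fingerprint(data: bytes) -> str:
    # Exact hash: identical bytes always give the same 64-character hex string
    return hashlib.sha256(data).hexdigest()

# Hypothetical stand-in for a hash database: it holds fingerprints, not files
known_hashes = {fingerprint(b"known file 1"), fingerprint(b"known file 2")}

def is_known(data: bytes) -> bool:
    # A newly discovered file is compared by its fingerprint alone
    return fingerprint(data) in known_hashes

print(is_known(b"known file 1"))   # True
print(is_known(b"known file 1!"))  # False: one extra byte, different hash
```

Because an exact hash changes completely when even one byte changes, a resized or re-compressed copy would not match. Systems used in practice, such as Microsoft's PhotoDNA, rely on perceptual hashes designed to also recognize visually similar images, which in turn makes false matches possible.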

Currently, the hash database includes about 5 million unique recordings, which NCMEC has collected over many years; hashes from the British Internet Watch Foundation were also included. Five million verified recordings of sexualized violence: this figure is better suited to describing the extent of the problem. But it still does not reveal even roughly how many minors are affected, because the hash database does not record how many images show the same person.

4,260: How often NCMEC notified investigative authorities

Probably the most accurate figure NCMEC provides about individual victims is 4,260. That is how many potential victims NCMEC newly identified in 2021 and reported to law enforcement. Why is this number so small?

Sexual violence against minors often happens over a long period of time and can produce many different photos and videos. Old recordings are often shared over and over again for years. An important part of NCMEC’s work is therefore „triage“. The term is best known from medicine: when there are many injured or sick people, doctors have to decide quickly who gets help first. NCMEC takes a similar approach. It wants to ensure the „most urgent cases, those where a child may be suffering ongoing abuse, get immediate attention“.

NCMEC analysts therefore examine newly discovered recordings, apparently only for cases from the United States, according to the EU report. The analysts try to identify the victims, or at least the alleged crime scene, using data shared by providers such as IP addresses. In 2021, NCMEC detected new cases involving 4,260 minors.

Reports from other countries are not investigated at NCMEC, but by local authorities. Many cases from the EU, for example, end up at the European police agency Europol. It investigates tips for 19 EU member states and Norway, as a Europol representative explained at a conference in the summer of 2022.

Larger EU member states do this with their own authorities; in Germany, for example, it is the Bundeskriminalamt (BKA), the Federal Criminal Police Office. In response to a request from netzpolitik.org, the BKA says: „The BKA currently receives most tips about files with child pornography content from NCMEC.“ The BKA then distributes these cases to the criminal police offices of the federal states (LKA).

Many millions: NCMEC figures in the news

News media repeatedly fail to correctly classify NCMEC numbers. The number of NCMEC reports does not describe the number of detected recordings of sexualized violence. But that is exactly what the Guardian claimed, writing of „29.3 million depictions of child abuse“.

The Stuttgarter Zeitung, a local German newspaper, interpreted NCMEC reports differently and claimed there were almost 22 million „proceedings initiated worldwide“ in 2020. But a report is not a proceeding.

The German Süddeutsche Zeitung magazine wrote that „70 million“ images and videos were reported to NCMEC in 2019, without mentioning that in many cases identical recordings were reported again and again. But there is a difference between the same image a million times and a million different images.

We, too, have handled the figures in an overly simplified way. In May, for example, we wrote that NCMEC had counted billions of photos of missing children over the past 37 years. What was missing was the crucial context that the hash database of unique recordings contains only 5 million images. What sticks is a vague impression of many millions of affected children, even if the numbers cited describe something else.

One in seven? Estimates

So how many minors are affected by sexualized violence? The need for an answer is obviously high – which may explain why NCMEC numbers are so often misclassified. Unfortunately, none of the NCMEC numbers do a good job of answering that question.

Studies that do not rely solely on cases of recordings distributed online provide one approximation. In 2016, researchers at the University of Ulm presented a paper evaluating such studies, all of which address the question of what percentage of people experienced sexual violence as minors.

According to the paper, the figures vary considerably from study to study: from single-digit percentages to about 20 percent. The German federal government’s commissioner for abuse has published a similar estimate: about one in seven or one in eight adults in Germany has experienced sexualized violence as a minor.

By contrast, the number of known cases is small. In 2021, 15,507 cases of „sexual abuse of children“ were reported in Germany, according to the BKA. In Germany, a child is defined as a person under the age of 14, whereas NCMEC considers all minors under 18 to be children. Such differing definitions make it even harder to compare figures from different countries.

This is what the figures mean for chat control

Despite the millions of reports, only a small fraction of the discovered recordings of sexualized violence lead to potential victims and perpetrators. German abuse commissioner Claus writes: „Even if this number seems small at first: This is where investigations must be carried out most urgently and consistently“. According to Claus, these cases involve ongoing assaults and violence. Children must be identified and protected in a very concrete way, Claus tells netzpolitik.org.

Cathrin Bauer-Bulst, who handles the topic for the EU Commission, argues similarly. At the „Child Safety Online Conference 2022“, she defended the EU’s proposed chat control legislation. First and foremost, child sexual abuse is an offline phenomenon, she said. The perpetrators are mostly not strangers but people from the child’s environment, such as parents or educators.

However, most cases remain undiscovered, for example because children are too young to talk about it or because they accept the abuse as part of their lives. According to Bauer-Bulst, the digital space is a key factor because many perpetrators network there, exchange recordings and encourage further acts. By this reasoning, the planned screening of many millions of online messages is a detour: a way for investigators to find at least some clues to new potential victims.

EU Commission expects better reports

Kerstin Claus highlights another aspect of chat control: the EU Commission also wants providers to look for sexual contact between adults and minors, known as cybergrooming. For investigations in this area to succeed, Claus says, it is necessary to „at least discuss possible legal and technical approaches in an unbiased manner.“

A spokeswoman for the EU Commission writes to netzpolitik.org that currently about 75 percent of NCMEC reports can be used in the EU. The rest is not „actionable“, for example, because information such as an IP address is missing or can no longer be linked to a person. According to the EU Commission’s plans, chat control is not intended to increase the volume of reports. „We don’t expect more reports, but reports of better quality that are more usable for law enforcement in the EU,“ the spokeswoman writes.

Numerous experts from the fields of IT, civil rights and child protection warn against chat control. Even ministers of the German government reject it. Chat control could lead to innocent people falling under suspicion because of false hits, since automatic recognition systems make mistakes. In addition, chat control would violate fundamental rights such as the confidentiality of communications.
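Why erroneous hits matter at the scale of chat control can be shown with simple arithmetic. The Python sketch below uses purely hypothetical numbers (one billion innocent messages, 1,000 illegal ones, a 90 percent detection rate, a false positive rate of 0.01 percent); none of them are measured values from the Commission, NCMEC or any provider. The function name `flag_breakdown` is likewise hypothetical.

```python
def flag_breakdown(innocent_msgs, illegal_msgs, detection_rate, false_positive_rate):
    """Split expected hits into correct and false ones (hypothetical model)."""
    true_hits = illegal_msgs * detection_rate         # illegal content correctly flagged
    false_hits = innocent_msgs * false_positive_rate  # innocent messages wrongly flagged
    share_false = false_hits / (true_hits + false_hits)
    return true_hits, false_hits, share_false

# Purely illustrative assumptions, not real error rates
t, f, s = flag_breakdown(innocent_msgs=1_000_000_000, illegal_msgs=1_000,
                         detection_rate=0.9, false_positive_rate=0.0001)
print(f"{t:.0f} correct hits, {f:.0f} false hits, {s:.1%} of hits are false")
# prints: 900 correct hits, 100000 false hits, 99.1% of hits are false
```

Under these assumptions, almost every hit would concern an innocent message, simply because innocent messages vastly outnumber illegal ones. The exact share depends entirely on the assumed rates.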